Identification Error

We live in a time when the visual capture of the human face has never been more ubiquitous—from the seemingly innocuous popularization of Korean skin care and the rise in plastic surgery due to front-facing camera selfies, to Hong Kong protestors’ evasions of facial recognition technology.

Biometrics, borders, and policing have merged, as facial recognition is deployed with unprecedented frequency. In the wake of 9/11, US government investment in defense and security exploded, especially around biometric research and development. And in that explosion, the face has become a central feature in a seemingly new iteration of surveillance: from “biometrics … for border crossings and visas; the proliferation of invasive surveillance cameras in urban settings …; biometric marketing that automates personalized advertisements …; the vast array of facial identification and verification platforms found in social media …, to the iPhone’s RecognizeMe application that uses face scanning to unlock phones.”1 In fact, this amalgamation of commercial, state, and military interests has now redefined the very meaning of the face itself—as a mode of governance, a quantitative code, a template, and a standardized form of measure and management.

Yet despite this context, in 2017, a Chinese woman in Nanjing was offered a refund after her colleague was able to unlock her two iPhones, each of which had been secured with Face ID and configured to her specific facial biometrics. Apple boasted that the statistical chance of an erroneous unlock was one in one million, though it acknowledged that a false match was more likely between people who were related. The two women in question were not.2

For Asian Americans, this example demonstrates the remarkable elasticity and longevity of Asian racialization. As the long histories of racialization in the US have taught us, one of the primary tropes of Asian racialization is misrecognition, misidentification, and indistinguishability. That can mean a person being mistaken for a different Asian person, or it can take the form of a more profound misrecognition: as yellow peril, wartime enemy, model minority, harbinger of disease, terrorist, spy—or simply too alien, unassimilable, and robotic to be recognized under the category of the Human.

One might view this contextual history as an extrapolation or an over-conflation of a seemingly innocuous anecdote. After all, isn’t it just a failure of Apple’s specific facial recognition technology? Or more incisively, isn’t this just another instance of technological racism, as most of us with an awareness of technology might understand it to be? Well, yes. It is technological racism, and more. I begin with the seemingly small instance of an iPhone misidentification deliberately, in order to link a recent technological problem to a long, hidden history of US racialization and surveillance, one that is indebted to the surveillance and misrecognition of Asian faces since the era of the Chinese Exclusion Act.

The contemporary challenge is the seemingly straightforward, yet troubling problem of errors within facial recognition algorithms. In 2019, a United States government study conducted by the National Institute of Standards and Technology issued findings that were intended to inform policymakers and help software developers refine the performance of their facial recognition algorithms.3 In testing the majority of the facial recognition technology industry, the institute found that most of these algorithms falsely identified Black and Asian faces ten to a hundred times more often than white faces, and ten times more often for women of color than for men of color.

The consequences of misidentification can be dire—Detroit’s Project Green Light, which equips police with facial recognition tools used to automate and expand state surveillance, has the distinction of having enabled the first widely publicized wrongful imprisonment by facial recognition algorithm.4

The rates of error for people of color have typically been attributed to a lack of training set data, and the solution often offered is more equitable inclusion and expansion. However, this simplistic solution ignores the longer history of racialization and its relationship to technology. These types of race-centered misrecognitions are not glitches but, in fact, a defining feature of digital life, and constitutive of the race-making project. Thus, this essay looks askance at these instances of misrecognition, at the binary logics of accuracy and error, and at the idea that the problem may be solved through inclusive/good, exclusive/bad training data sets.

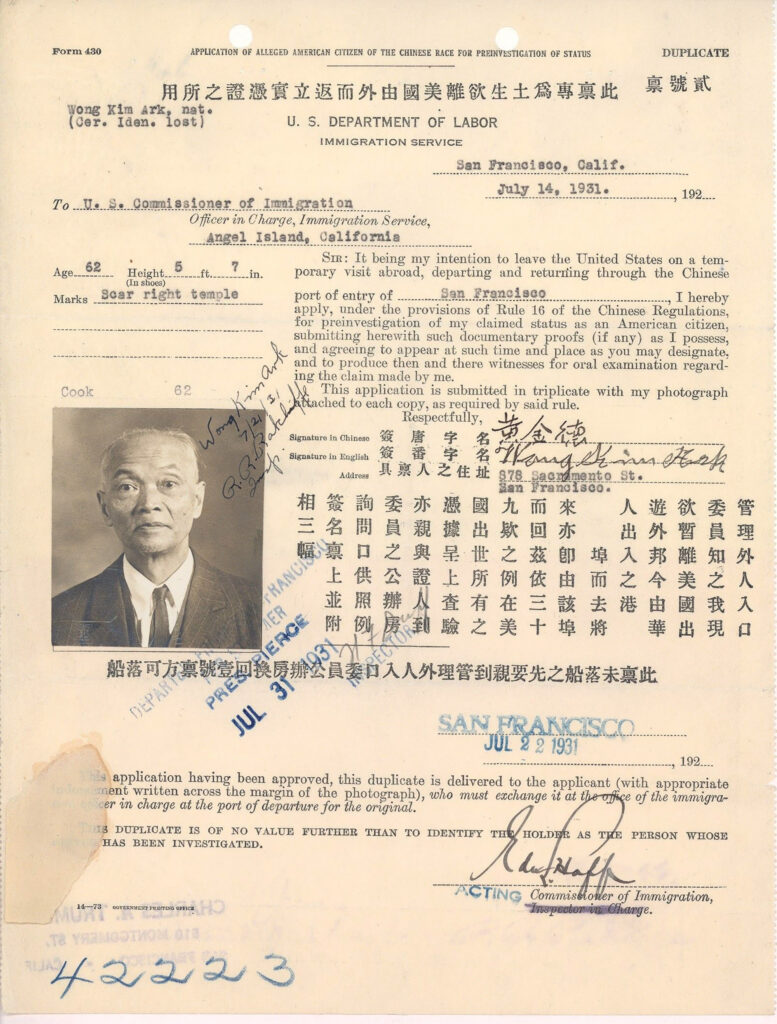

Form 430: Application of Alleged American Citizen of the Chinese Race for Preinvestigation of Status of Wong Kim Ark, July 13, 1931. RECORDS OF THE IMMIGRATION AND NATURALIZATION SERVICE, RECORD GROUP 85. NATIONAL ARCHIVES IDENTIFIER: 18556185.

What happens when we instead reframe the glitches in these technologies not simply as mistakes to be resolved with more data, but as racial legacies that continue to haunt us and form our surveillance apparatus? Indeed, these tendencies may be understood as endemic to the very system of facial surveillance and to the racial histories of data governance. This reframing pushes us to linger in critical histories that are often disavowed, yet continue to haunt facial recognition technology.

I use haunting here as more than a convenient metaphor for race’s often underwritten presence within this technology. Race is an elusive, ghostly category, neither fact nor mere fiction, that nonetheless relentlessly haunts certain bodies and not others.5 In facial recognition technology, misrecognitions appear as a type of ghostwriting from the past that refuses to stay invisible. I argue that certain elements in the US history of Asian racialization effectively serve as ghosts who write their presence into the “errors” in present-day technology. Haunting is “an animated state in which a repressed or unresolved social violence is making itself known.”6 Social violence, then, is the uncredited ghostwriter of this technology, a palimpsest of events that appear as errors but are in fact race’s perpetual presence.

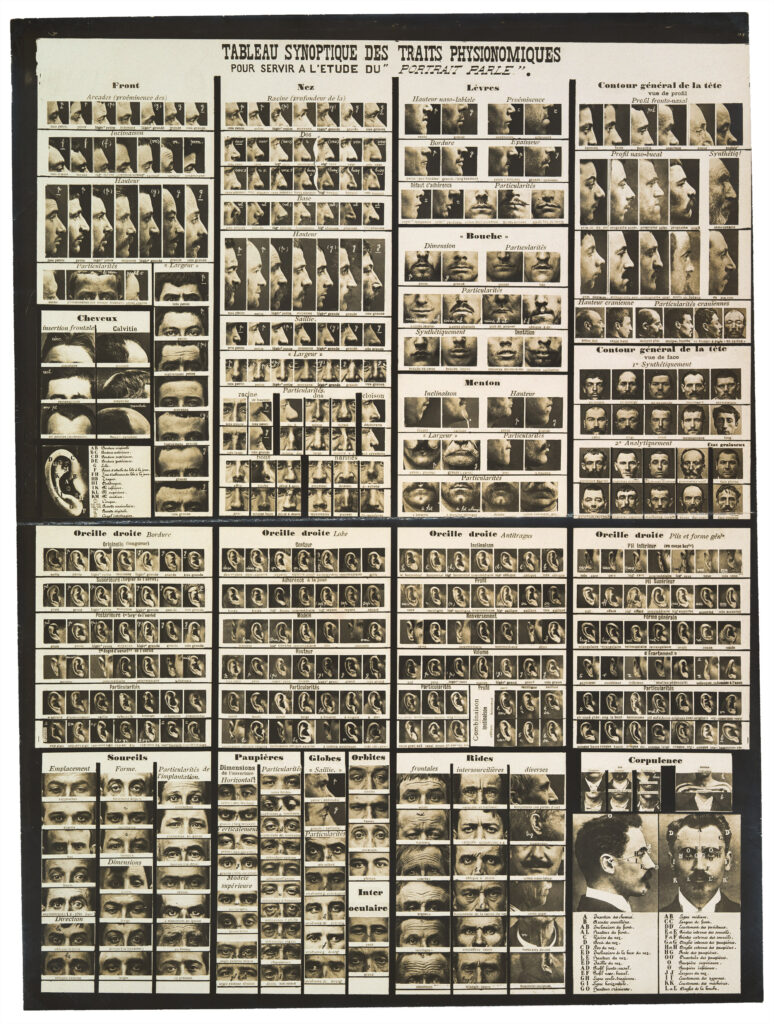

Alphonse Bertillon’s Synoptic table of physiological traits (Tableau synoptique des traits physionomiques: pour servir à l’étude de “portrait parlé”), ca. 1909. METROPOLITAN MUSEUM OF ART, TWENTIETH-CENTURY PHOTOGRAPHY FUND, 2009.

Critical histories of facial recognition algorithms often excavate how this technology is surreptitiously linked to the history of photography and its role in legitimating typology and race science. Developing alongside the criminological sciences of the nineteenth century, photography inaugurated new relations between visual media and conceptions of evidence and objectivity. It was out of the photograph’s ability to document, measure, and compare bodies that the practice of sorting them into human types arose. Photography instantiated new taxonomies of normal and other, familiar and exotic, proper and improper. This muddied the constructs concerning subjective and objective knowledge, creating systems of surveillance and power, and casting the body as a repository of knowledge that recalibrated race in new ways.

Most histories attentive to these dynamics make note of French criminologist and anthropologist Alphonse Bertillon and his use of anthropometry, the measurement of human bodies, for a new system of identification to be used by law enforcement. His implementation of rogues' galleries and mug shots provided coveted data for eugenics-based ideas of criminality as read on and through the body, weaponizing the face specifically for state surveillance and control.7 But contemporary facial recognition technology is also haunted by other biometric pasts in the US, ones that closely tie portraiture to racial regulation.

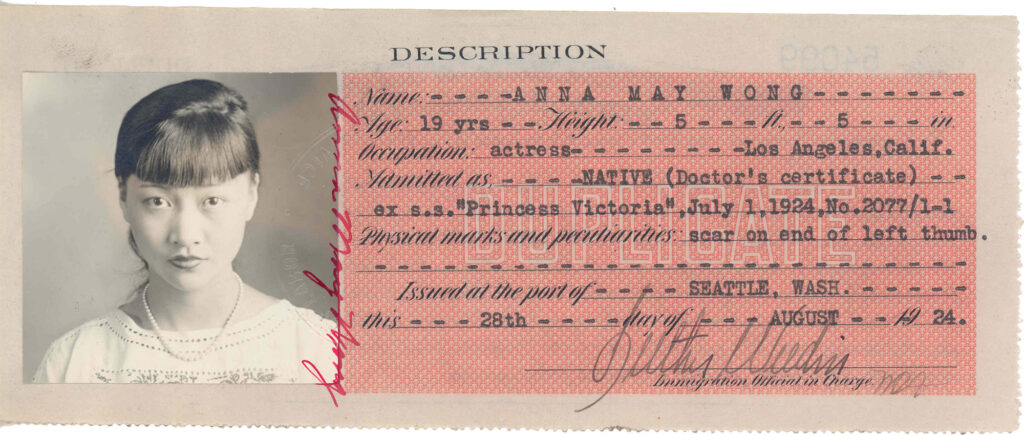

Chinese Exclusion laws marked the formal emergence of visual documentation regulation into immigration policy. Passed in 1875 and 1882, the Page Act and the Chinese Exclusion Act effectively excluded almost all Chinese immigration to the US, reflecting widespread American beliefs about Chinese unassimilability and racial inferiority. Chinese immigrants were the first group to be excluded from the US solely on the basis of race and class. And they simultaneously became the first group to be photographed by the federal immigration bureau, which adopted extensive photographic documentation in an attempt to regulate Chinese immigration. Stated more directly, the bureau implemented photographic identification for the first time for the Chinese exclusively, effectively establishing the Asian face as the unacknowledged yet foundational subject of state regulatory surveillance and data gathering within the US.8

Certificate of Identity for legendary actor Anna May Wong. RECORDS OF THE IMMIGRATION AND NATURALIZATION SERVICE, RECORD GROUP 85. NATIONAL ARCHIVES IDENTIFIER: 5720287.

The irony of implementing photographic identification to stifle Chinese immigration was that would-be Chinese immigrants often turned to their own advantage the racialized logics that cast the Chinese as both inscrutable (emotionally unreadable) and indistinguishable (they all look the same). Since the only Chinese who were allowed to enter the United States were those who already had a relative there, those wishing to enter could create fake family histories and forged documents, with accompanying photographs. Dubbed “paper sons,” these Chinese immigrants weaponized, and thus embraced, the very logics of racist anti-Asian misrecognition for their own migratory aspirations. Effectively, these paper sons were able to exploit and strategically bypass the very system that sought to exclude them. Thus, the Asian face functions as a sociotechnical formation, an origin of bioinformatic control, and, simultaneously or perhaps epiphenomenally, an evasion of it.

Excavating the tactics that Chinese immigrants used in this context does not legitimate or excuse indistinguishability as valid racial thinking. Rather, in thinking about misrecognition differently, we can locate the strategies, negotiations, and creative technological practices that racialized subjects used against, and through, state surveillance and racial exclusion in order to recircuit its disciplinary logics. In sum, the history of facial imaging is haunted by racial surveillance and the policing of immigrants. The glitches, errors, and misrecognitions in facial recognition are signals of anti-Asian racial violence—ghostwriters at the margins of history and in the foundations of technology. These ghosts are reminders that misrecognition is intrinsic to this technology rather than an errant exception.

1. Zach Blas, “Escaping the Face: Biometric Facial Recognition and the Facial Weaponization Suite,” Media-N CAA Conference Edition 9, no. 2 (2013).

2. https://www.huffpost.com/entry/iphone-face-recognition-double_n_5a332cbce4b0ff955ad17d50

3. https://www.nist.gov/news-events/news/2019/12/nist-study-evaluates-effects-race-age-sex-face-recognition-software

4. Kashmir Hill, “Wrongfully Accused by an Algorithm,” New York Times, June 24, 2020, https://www.nytimes.com/2020/06/24/technology/facial-recognition-arrest.html

5. Katrina Karkazis and Rebecca Jordan-Young, “Sensing Race as a Ghost Variable in Science, Technology, and Medicine,” Science, Technology, & Human Values 45, no. 5 (2020): 763.

6. Avery Gordon, Ghostly Matters: Haunting and the Sociological Imagination (University of Minnesota Press, 2008), xvi.

7. Allan Sekula, “The Body and the Archive,” October 39 (1986): 18–19, https://doi.org/10.2307/778312

8. Anna Pegler-Gordon, In Sight of America: Photography and the Development of US Immigration Policy (University of California Press, 2009).